A nice theory is one side of the coin; in day-to-day practice, however, there are many more hurdles to clear before accurate and reliable results are obtained.

The following example, exotic only in appearance, shows what that means. It asks the question:

How accurate are precise data?

Observations:

I have analyzed the raw data of three TRITON-generated data files of approximately the same length (~11.5 h) for an Ames Nd standard sample. The data acquisition parameters are said to be identical.

Files:

F4 and F12 obtained from J. Schwieters (Thermo, Bremen)

P12 obtained from G. Caro (IPG, Paris)

The following figure shows a plot of the 144/146Nd raw ratios for these three files:

F4:

F12:

P12:

F12:

P12:

Using the La Jolla standard value (RN = R144/146 = 1.3852), one observes a remarkable static offset of the measured raw ratio r144/146 for all three files:

Time scale is in [sec]: The total lenght of the runs is ~11.5 hours each.

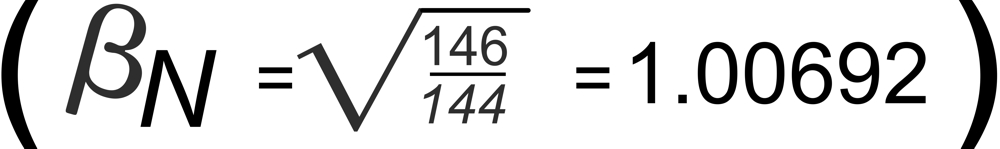

The (questionable) explicit correction of raw ratios before the application of fractionation correction formulae, as shown in this note:

)

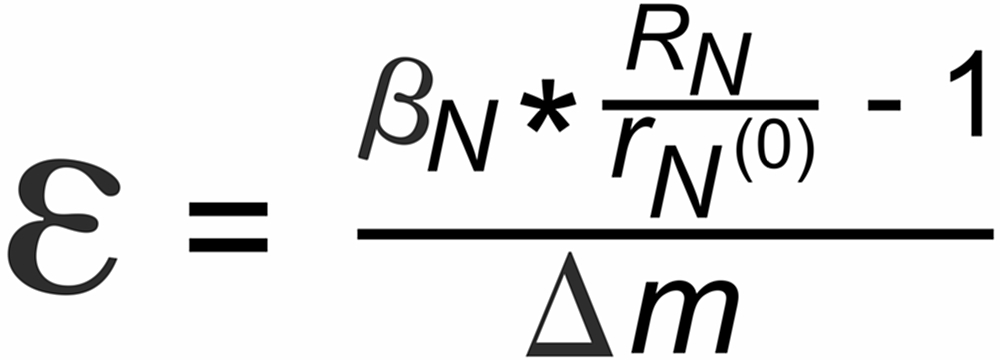

This static offsett is defined as

F4: Red

F12: Blue

P12: Green

(block means only)

3.917 0/00

3.852 0/00

2.477 0/00

3.852 0/00

2.477 0/00

per mass unit

rN(0) is the raw ratio of the i144 and i146 ion currents at the very beginning of the data acquisition. The question is:

Has this offset any influence on the fractionation corrected "true" ratio?

I will offer two answers:

The so-to-speak "automatic" (or implicit) correction of (mass dependent) static discriminations as a (favorable) side effect of fractionation correction, as shown in this section:

Has this offset any influence on the fractionation corrected "true" ratio?

I will offer two answers:

The so-to-speak "automatic" (or implicit) correction of (mass dependent) static discriminations as a (favorable) side effect of fractionation correction, as shown in this section:
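Independently of the two treatments referred to above, the arithmetic behind the implicit correction can be sketched in a few lines of Python. Everything here is an assumption made for illustration. The defining formula of the static offset is not reproduced in the text, so the sketch assumes it is the relative deviation of rN(0) from RN divided by the mass difference of 2. The exponential law stands in for whichever fractionation correction formula is actually used. The isotope masses are approximate, the 143/144 value is only a typical La Jolla figure, and the bias exponents and all function names are hypothetical.

```python
import math

# Approximate atomic masses (u) of 143Nd, 144Nd and 146Nd (assumed values).
M143, M144, M146 = 142.909814, 143.910087, 145.913117
R_N = 1.3852  # reference 144/146 ratio quoted in the text


def static_offset_permil_per_amu(r_n0, ref=R_N, delta_m=2.0):
    """ASSUMED definition: relative deviation of rN(0) from RN, per mass unit, in per mil."""
    return 1000.0 * (r_n0 / ref - 1.0) / delta_m


def raw_ratio_from_offset(offset_permil, ref=R_N, delta_m=2.0):
    """Inverse of the assumed definition: the raw 144/146 ratio implied by an offset."""
    return ref * (1.0 + offset_permil * delta_m / 1000.0)


def exp_law_beta(r_144_146, ref=R_N):
    """Exponent beta that satisfies ref = r_144_146 * (M144 / M146) ** beta."""
    return math.log(ref / r_144_146) / math.log(M144 / M146)


def correct_143_144(r_143_144, r_144_146):
    """Exponential-law correction of 143/144, normalized to the 144/146 reference."""
    return r_143_144 * (M143 / M144) ** exp_law_beta(r_144_146)


def exponential_bias(true_ratio, m_num, m_den, b):
    """Toy bias that follows the exponential law (b > 0 favors the lighter isotope)."""
    return true_ratio * (m_num / m_den) ** (-b)


def demo():
    """Relative residuals of corrected 143/144 for two toy static-bias models."""
    true_143_144 = 0.511850  # illustrative value only

    # Case 1: fractionation plus a static bias, both exponential in mass.
    b_total = 0.30 + 0.55  # hypothetical fractionation + static exponents
    r_n = exponential_bias(R_N, M144, M146, b_total)
    r_143 = exponential_bias(true_143_144, M143, M144, b_total)
    exp_residual = correct_143_144(r_143, r_n) / true_143_144 - 1.0

    # Case 2: a static bias that is strictly linear in the mass difference.
    eps = 3.917e-3  # the F4 offset per mass unit, used as a toy input
    r_n = R_N * (1.0 + 2.0 * eps)
    r_143 = true_143_144 * (1.0 + 1.0 * eps)
    lin_residual = correct_143_144(r_143, r_n) / true_143_144 - 1.0

    return exp_residual, lin_residual


if __name__ == "__main__":
    print("implied rN(0) for F4:", raw_ratio_from_offset(3.917))
    print("relative residuals (exponential, linear):", demo())
```

In this toy model, a static discrimination with the same mass dependence as the correction law is absorbed into the exponent and vanishes from the corrected ratio. A bias with a different mass dependence leaves a small residual. Whether real instrumental offsets behave like either case is exactly the open question raised here.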

Summary:

High-precision runs seem to require many more checks, and correction methods that are still not really proven, before their high precision can translate into results of genuinely high accuracy.

(July 2004)


Dr. Karleugen Habfast: Theory of Fractionation Correction - Practice vs Theory - Theory in a real world